The intuition that political polarization is caused by lack of access to dissenting views has much to recommend it. First of all, if you don’t know what these views are, you can’t learn from them. Second, if you only know strongly dialectical or distorted (straw man) versions of them, you’re unlikely to find your opponents to be reasonable people with plausible views. The obvious antidote would seem to be to sit and listen to dissenting voices in their own words. Let’s call this view “the horse’s mouth.” On this view, looking into the horse’s mouth will have a moderating effect: people are eminently reasonable, so if you just listen to them in their own reasonable words, you’ll be compelled to admit as much (and so abandon your polarized, straw man versions of their views).

Now comes science to spoil everyone’s intuitions. Some political scientists have tested whether exposure to opposing views decreases polarization. The long and the short of it is that it doesn’t, and it may even increase it (though for Democrats the increase was not statistically significant). From their paper:

Social media sites are often blamed for exacerbating political polarization by creating “echo chambers” that prevent people from being exposed to information that contradicts their preexisting beliefs. We conducted a field experiment that offered a large group of Democrats and Republicans financial compensation to follow bots that retweeted messages by elected officials and opinion leaders with opposing political views. Republican participants expressed substantially more conservative views after following a liberal Twitter bot, whereas Democrats’ attitudes became slightly more liberal after following a conservative Twitter bot—although this effect was not statistically significant. Despite several limitations, this study has important implications for the emerging field of computational social science and ongoing efforts to reduce political polarization online.

This is disappointing in part because things were looking good for the horse’s mouth view. It had recently been shown that another representationalist paradox, the backfire effect, failed to replicate. In the “backfire effect” study, Brendan Nyhan and Jason Reifler found that attempts to correct mistaken information would backfire in certain circumstances. The idea, in other words, is that exposure to facts is not sufficient for correction and may in fact make one more entrenched.

Naturally, we should be cautious with such results, as the authors themselves warn:

Although our findings should not be generalized beyond party-identified Americans who use Twitter frequently, we note that recent studies indicate this population has an outsized influence on the trajectory of public discussion—particularly as the media itself has come to rely upon Twitter as a source of news and a window into public opinion (47).

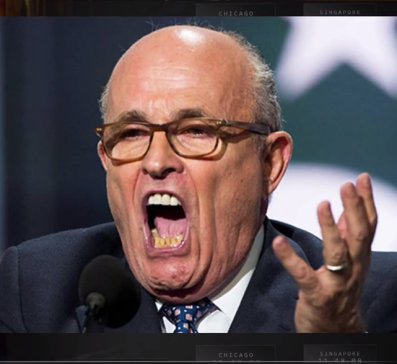

In closing, I might venture a hypothesis for why people didn’t moderate their views. Prominent politicians on Twitter, from what I’ve observed, produce content for a partisan audience. Often that audience is already polarized and not particularly well informed. Content that appeals to it, viewed by an outside observer, might only tend to confirm the observer’s worst views about the other side. If you see a bunch of tweets urging you to “lock her up,” you can hardly be blamed for thinking their authors to be idiots.